Robotic Process Automation (RPA) is a software technology that enables software robots to emulate the way humans interact with digital systems and software. RPA focuses primarily on automating mundane, repetitive tasks so that corporate talent can focus on higher-value delivery (Blueprint, 2020). RPA has considerable potential to guide businesses through their digital transformation, but which aspects do managers need to focus on when implementing RPA in their business?

When considering RPA deployment, one of the key things to understand is which processes are RPA-friendly (Simpson-Grange, 2021). A process's suitability for RPA can also be assessed in terms of its data inputs, its complexity, its stability, and the degree of human involvement (Vinutha, 2019). To get the best from your RPA experience, and to make the best possible business case to your executives, it is useful to identify the business processes in which RPA could have the biggest impact.

According to Behrens (2014), processes should have the following five characteristics to be well suited to RPA projects: (1) the process requires access to multiple systems, (2) the process is prone to human error, (3) the process can be broken into unambiguous rules, (4) the process, once started, needs limited human intervention, and (5) the process requires limited exception handling.

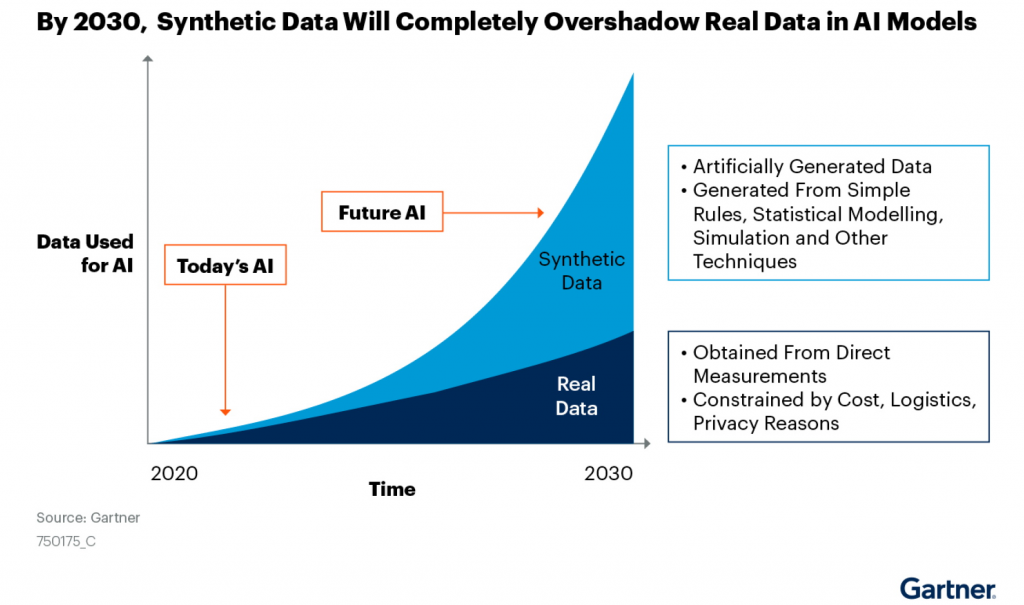
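One way to make the five characteristics practical is to turn them into a simple yes/no checklist. The sketch below is only an illustration: the class name, field names, and the example process are hypothetical and do not come from Behrens (2014).

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class ProcessProfile:
    """Yes/no answers for the five characteristics (field names are hypothetical)."""
    uses_multiple_systems: bool
    prone_to_human_error: bool
    has_unambiguous_rules: bool
    needs_limited_human_intervention: bool
    needs_limited_exception_handling: bool


def unmet_characteristics(profile: ProcessProfile) -> list[str]:
    """Return the names of the characteristics the process does not satisfy."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


# Hypothetical example: a process that meets everything except exception handling.
invoice_entry = ProcessProfile(True, True, True, True, False)
print(unmet_characteristics(invoice_entry))
```

An empty list would suggest the process fits all five characteristics. Anything left in the list is worth discussing before committing to automation.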

RPA should be considered for processes that will deliver notable business benefits. It should enable a business to provide higher business value, generate meaningful cost benefits, and be aligned with company goals (Vinutha, 2019). Companies should first automate smaller processes. Once these smaller successes have been achieved, the company can extend automation to harder tasks, because by then it is more familiar with RPA software and better positioned to leverage it for optimising its enterprise systems (CiGen, 2020). The best tasks to automate are those that are data-driven, can be standardised, and are controlled through rules, so that they occur in the same manner every time (CiGen, 2020). RPA can be used for fairly straightforward tasks such as copying and pasting data or typesetting, all the way up to more complex tasks such as identifying fraud or accounting payments (CiGen, 2020).

With RPA, organizations can automate the whole data input process for an ERP system, from data collection through recording, updating, manipulating, and validating data (Meijer, 2019). When choosing the right processes for an organization's RPA project, the objective is to find characteristics that produce better results faster. Once you have defined your criteria for RPA, as well as your smaller-scale goals, it is easy to build a systematic framework for evaluating each process that is a candidate for automation (Meijer, 2019).
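A systematic framework of this kind could be as simple as a weighted scorecard. The following is a minimal sketch, assuming each candidate process is rated from 0 to 5 on a handful of criteria. The criterion names, the weights, and the two example processes are all hypothetical and should be replaced with your own.

```python
from __future__ import annotations

# Hypothetical criteria and weights (they sum to 1.0); adjust to your own goals.
WEIGHTS = {
    "rule_based": 0.3,
    "data_driven": 0.3,
    "stable": 0.2,
    "low_human_involvement": 0.2,
}


def suitability_score(ratings: dict[str, int]) -> float:
    """Weighted sum of 0-5 ratings, one rating per criterion in WEIGHTS."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


def rank_candidates(candidates: dict[str, dict[str, int]]) -> list[str]:
    """Return process names ordered from most to least suitable."""
    return sorted(
        candidates,
        key=lambda name: suitability_score(candidates[name]),
        reverse=True,
    )


# Hypothetical ratings for two candidate processes.
candidates = {
    "contract negotiation": {
        "rule_based": 1, "data_driven": 2, "stable": 2, "low_human_involvement": 1,
    },
    "invoice data entry": {
        "rule_based": 5, "data_driven": 5, "stable": 4, "low_human_involvement": 4,
    },
}
print(rank_candidates(candidates))
```

Applying the same scorecard to every candidate keeps the comparison consistent, and the ranking gives a natural starting point for picking the first, smaller processes to automate.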

Sources:

Simpson-Grange, A. (2021). Robot Process Automation, Pt2 – What is RPA? [online] AMY SIMPSON-GRANGE – BLOG. Available at: https://amysimpsongrange.com/2021/06/15/rpa-pt2-what-is-rpa/ [Accessed 9 Oct. 2022].

Vinutha. (2019). Handy tips for choosing the right processes for RPA. [online] Nalashaa. Available at: https://www.nalashaa.com/tips-to-choose-right-rpa-processes/ [Accessed 9 Oct. 2022].

CiGen. (2020). 5 Factors to Choosing the Right Business Processes to Automate. [online] Available at: https://www.cigen.com.au/five-factors-choosing-right-business-process-automate/ [Accessed 9 Oct. 2022].

Behrens, K. (2014). Five Characteristics of Business Processes That Are Perfect for RPA. [online] UiPath. Available at: https://www.uipath.com/blog/rpa/five-characteristics-of-business-processes-that-are-perfect-for-rpa [Accessed 9 Oct. 2022].

Blueprint. (2020). How to Select the Right Processes for RPA: Define a Criteria. [online] www.blueprintsys.com. Available at: https://www.blueprintsys.com/blog/rpa/select-right-processes-for-rpa [Accessed 9 Oct. 2022].

Meijer, R. (2019). 8 Common Business Processes You Can Automate with RPA. [online] Roboyo. Available at: https://roboyo.global/blog/8-common-business-processes-you-can-automate-with-rpa/ [Accessed 9 Oct. 2022].